How We Replaced Our Search Engine With a Cache

We killed MeiliSearch and moved search entirely to the browser. It's faster, simpler, and free.

We used to run MeiliSearch for full-text search across Blue. It indexed hundreds of gigabytes of records from our MySQL database, kept (or tried to keep) itself in sync, and gave users instant search results across their workspaces.

Then we built a custom frontend cache for performance reasons — and realized we didn’t need a search engine anymore.

Today, every search in Blue happens entirely in the browser. No server round-trip. No search index to sync. No separate service to operate. A dedicated Web Worker runs Fuse.js against records already sitting in memory, and returns results in milliseconds.

This post is about how we got there, what was wrong with the “proper” approach, and why your cache might be your best search index too.

The problem with MeiliSearch

MeiliSearch is a good piece of software. For small datasets with infrequent updates, it’s genuinely delightful — fast to set up, fast to query, great typo tolerance out of the box. Our problems started when we tried to run it at scale for a production SaaS serving thousands of companies.

Sync was the first crack. MeiliSearch has no native database sync. There’s no built-in CDC (Change Data Capture), no official MySQL connector, and nothing comparable to the Logstash and Debezium pipelines commonly used to feed Elasticsearch. You’re on your own. We used a community-maintained sync tool that read from the MySQL binlog, but it was fragile. The index would fall behind for hours during high-write periods. Users would create a record, search for it, and get nothing. That’s a trust-destroying experience.

Memory was the second. MeiliSearch uses LMDB under the hood, which memory-maps the entire index. In practice, it consumes as much RAM as the OS makes available. The --max-indexing-memory flag? Frequently ignored. We hit OOM kills regularly. In Docker, it would read the host’s total memory instead of the container limit, then try to use two-thirds of it. For a database our size, the storage amplification alone was significant — a 10MB source document becomes roughly 200MB of indexed storage.

Stability was the third. Under load, MeiliSearch would sometimes stop responding entirely. Not slow — unresponsive. CPU pinned at 100%, no API responses. We saw reports of the same pattern from others running it at scale: services becoming unavailable multiple times a day, task database corruption during upgrades, the index freezing during large document ingestion.

All of this on a three-person engineering team. Every hour spent babysitting a search service was an hour not spent building the product. And the kicker: MeiliSearch’s open-source edition is single-node only. No replication, no failover, no horizontal scaling. If the node goes down, search goes down.

We tolerated it because search felt like a problem that required a search engine. That assumption turned out to be wrong.

The cache changed everything

When we rebuilt our frontend data layer, the goal was performance — instant workspace switching, sub-100ms loads from IndexedDB, background sync via Web Workers. Search wasn’t part of the plan.

But once the cache was working, we noticed something obvious: every record in a user’s workspace was already sitting in memory. Titles, descriptions, custom field values, tags, assignees — all normalized, all indexed by workspace, all instantly accessible. The data MeiliSearch was indexing from MySQL was the same data the browser already had.

The question stopped being “how do we make MeiliSearch work better” and became “why are we running MeiliSearch at all?”

Building search in days, not months

The actual implementation took days. The hard work — building the cache, the Web Worker infrastructure, the phased loading system — was already done. Search was just a new consumer of data that already existed.

The architecture is simple. A dedicated search Web Worker holds a Fuse.js index. As records load into the cache (during both initial fetch and background sync), they’re simultaneously fed to the search worker. The worker accepts four message types: init, add, remove, and search. Results come back with match metadata for highlighting.
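A minimal sketch of that four-message protocol might look like the following. Only the message type names (init, add, remove, search) come from the description above; the payload shapes, `SearchDoc`, and `handleMessage` are hypothetical, and a naive case-insensitive substring match stands in for Fuse.js so the example runs without dependencies.

```typescript
// Hypothetical record and message shapes; only the four type names are from the post.
interface SearchDoc { id: string; title: string }

type WorkerMessage =
  | { type: 'init'; records: SearchDoc[] }
  | { type: 'add'; records: SearchDoc[] }
  | { type: 'remove'; ids: string[] }
  | { type: 'search'; query: string }

// Stand-in index: a Map keyed by record id.
// In a real worker, Fuse.js would own the index and do the fuzzy matching.
const index = new Map<string, SearchDoc>()

function handleMessage(msg: WorkerMessage): SearchDoc[] {
  switch (msg.type) {
    case 'init':
      // Replace the whole index with the initial batch.
      index.clear()
      for (const r of msg.records) index.set(r.id, r)
      return []
    case 'add':
      // Upsert by id, so background sync can resend changed records.
      for (const r of msg.records) index.set(r.id, r)
      return []
    case 'remove':
      for (const id of msg.ids) index.delete(id)
      return []
    case 'search': {
      const q = msg.query.trim().toLowerCase()
      if (q === '') return []
      // Naive substring match as a placeholder for fuzzy search.
      return Array.from(index.values()).filter(
        (r) => r.title.toLowerCase().indexOf(q) !== -1,
      )
    }
  }
}

// Inside a Web Worker this could be wired up roughly as:
//   self.onmessage = (e) => self.postMessage(handleMessage(e.data))
```

Keeping the handler a plain function, separate from the `onmessage` wiring, makes it easy to unit-test outside a worker.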

Here’s the Fuse.js configuration:

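    import Fuse from 'fuse.js'

    const fuse = new Fuse(records, {
      // Fields to search; dotted paths reach into nested objects and arrays
      keys: ['title', 'todoList.title', 'description', 'fields.value'],
      // 0.0 requires a perfect match, 1.0 matches anything
      threshold: 0.2,
      // Score a match the same wherever it occurs in the string
      ignoreLocation: true,
      // Return matched character ranges, used for highlighting
      includeMatches: true,
      // Drop matched ranges shorter than 3 characters
      minMatchCharLength: 3,
    })

threshold: 0.2 keeps results tight: close matches only, no noise. ignoreLocation: true means a match anywhere in the string counts, not just near the beginning. We search across record titles, the title of each record’s parent list (todoList.title), descriptions, and custom field values.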

Each record is normalized into a search-specific shape before indexing:

    interface SearchRecord {
      id: string
      title: string
      description?: string
      fields?: { name: string; value: string }[]
      todoList: {
        id: string
        title: string
        project?: { id: string; name: string; slug: string }
      }
    }

Custom field extraction handles every searchable field type: text, email, phone, URL, numbers, select fields, countries, locations, references. Non-searchable types like dates, checkboxes, and files are excluded. This extraction runs twice: once during Phase 1 (core record fields) and again during Phase 2 (enriched custom field data), so users can search before all data has loaded and get progressively better results.
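The extraction step could be sketched roughly as below. The `FieldType` names, the `RawField` shape, and `extractSearchableFields` are all hypothetical labels for the categories listed above, not Blue's actual schema. The output matches the `{ name, value }` pairs in the `fields` property of `SearchRecord`.

```typescript
// Hypothetical type names for the field categories the post mentions.
type FieldType =
  | 'text' | 'email' | 'phone' | 'url' | 'number' | 'select'
  | 'country' | 'location' | 'reference'
  | 'date' | 'checkbox' | 'file'

// Hypothetical shape of a custom field as it arrives from the cache.
interface RawField { name: string; type: FieldType; value: unknown }

// Types worth feeding to the search index; dates, checkboxes,
// and files are deliberately left out.
const SEARCHABLE = new Set<FieldType>([
  'text', 'email', 'phone', 'url', 'number', 'select',
  'country', 'location', 'reference',
])

function extractSearchableFields(
  fields: RawField[],
): { name: string; value: string }[] {
  const out: { name: string; value: string }[] = []
  for (const f of fields) {
    if (!SEARCHABLE.has(f.type)) continue
    if (f.value === null || f.value === undefined) continue
    // Stringify so numbers and select labels are searchable as text.
    const value = String(f.value).trim()
    if (value !== '') out.push({ name: f.name, value })
  }
  return out
}
```

Because the function is pure, running it once on core fields and again on enriched data simply produces a fuller list the second time.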

The search UI

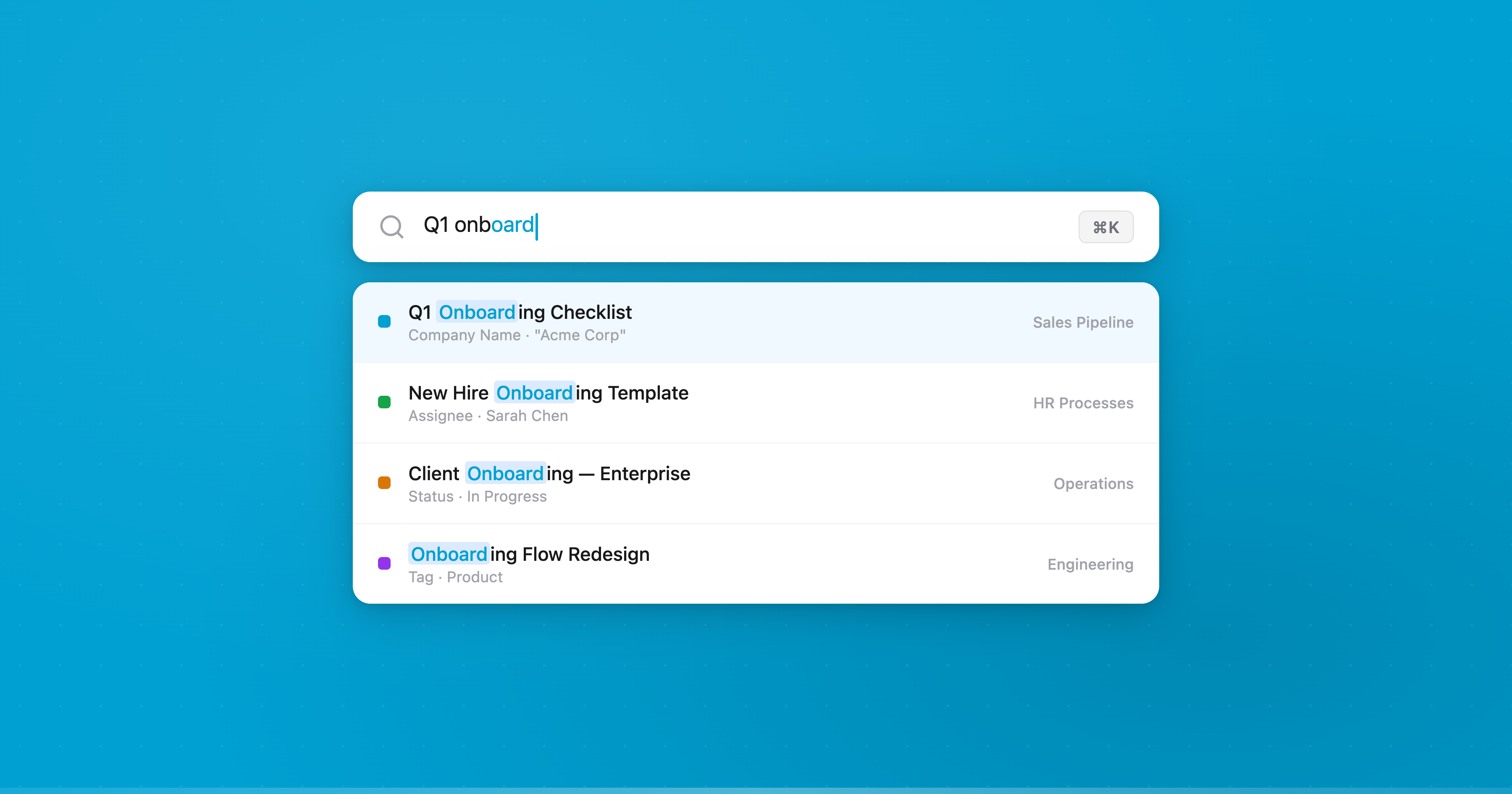

Global search is triggered with Cmd+K. It searches across five layers simultaneously:

- Records — fuzzy search via the Web Worker, with match highlighting and context snippets

- Workspaces — fuzzy match on workspace names

- Organizations — fuzzy match across all orgs the user belongs to

- Navigation — settings, accounts, shortcuts, theme options

- Quick links — docs, API, notifications (shown when the search field is empty)

Results show which field matched and a snippet of the surrounding context. If you search “acme” and it matches a custom field called “Company Name” on a record titled “Q1 Review”, you see the record title, the field name, and the highlighted match — all computed client-side from match metadata Fuse.js returns.
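One way to turn that match metadata into a context snippet is sketched here. With includeMatches enabled, Fuse.js reports each match as inclusive [start, end] character offsets into the matched value. The `buildSnippet` helper, the `Snippet` shape, and the choice to highlight the longest range are assumptions made for illustration.

```typescript
// Inclusive [start, end] character offsets, as Fuse.js reports them.
type Range = readonly [number, number]

// Hypothetical result shape: the UI can wrap `match` in a highlight element.
interface Snippet { before: string; match: string; after: string }

function buildSnippet(
  value: string,
  indices: readonly Range[],
  context = 20,
): Snippet | null {
  if (indices.length === 0) return null

  // Assumption: highlight the longest matched range.
  let best = indices[0]
  for (const r of indices) {
    if (r[1] - r[0] > best[1] - best[0]) best = r
  }

  const start = best[0]
  const end = best[1] + 1 // convert the inclusive end to an exclusive one

  return {
    before: value.slice(Math.max(0, start - context), start),
    match: value.slice(start, end),
    after: value.slice(end, end + context),
  }
}
```

Returning plain string segments rather than an HTML string lets the UI render the highlight with text nodes, so field values never need to be injected as markup.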

Keyboard navigation works the way you’d expect: arrow keys to move, Enter to open, Escape to close. The first result is auto-selected on every keystroke.

Duplicate detection for free

Once the search index existed, duplicate detection was trivial. When a user types a new record title, we run it against every record in the current workspace:

    const fuse = new Fuse(records, {
      // Only titles matter for duplicate detection
      keys: ['title'],
      // Looser than global search, to catch near-duplicates
      threshold: 0.4,
      // Return a score with each result so we can show similarity
      includeScore: true,
      ignoreLocation: true,
    })

A higher threshold (0.4 vs 0.2) catches looser matches. Fuse returns a score from 0 (perfect match) to 1 (no match), which we invert to a percentage: a score of 0.3 becomes “70% similar.” If matches are found, the user sees them before creating the record, with options to open the existing record, create anyway, or cancel.
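The score inversion is simple arithmetic. A small sketch follows, where `similarityPercent` and `describeDuplicates` are hypothetical helper names and the result shape mirrors what Fuse.js returns when includeScore is on:

```typescript
// Convert a Fuse.js score (0 = perfect match, 1 = no match)
// into a whole-number similarity percentage.
function similarityPercent(score: number): number {
  const clamped = Math.min(1, Math.max(0, score))
  return Math.round((1 - clamped) * 100)
}

// Minimal shape of a Fuse.js result with includeScore enabled.
interface ScoredResult<T> { item: T; score?: number }

// Build display labels for possible duplicates (hypothetical helper).
function describeDuplicates(
  results: ScoredResult<{ title: string }>[],
): string[] {
  return results
    .filter((r) => r.score !== undefined)
    .map((r) => `${r.item.title} (${similarityPercent(r.score as number)}% similar)`)
}
```

For example, a result with a score of 0.3 for a record titled "Q1 Review" would be labelled "Q1 Review (70% similar)".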

This runs in real-time as they type. No server call. No separate service. Just the cache doing double duty.

What we gained

Speed. Search results appear as you type, in the same frame. No network latency. No waiting for an index to be queried. The Fuse.js worker returns results in single-digit milliseconds for workspaces with tens of thousands of records.

Reliability. There is no sync lag because there is no sync. The search index is the cache. When a record is created, it’s in the index immediately — not after a binlog consumer processes it and MeiliSearch ingests it.

Simplicity. We eliminated an entire service from our infrastructure. No MeiliSearch server to provision, monitor, restart after OOM kills, or upgrade carefully to avoid task database corruption. No sync pipeline to maintain. No secondary storage eating hundreds of gigabytes of disk.

Cost. MeiliSearch was running on its own server. That server is gone. The search computation happens on the user’s device, distributed across every browser that opens Blue. The most efficient infrastructure is the infrastructure you don’t run.

Features. Duplicate detection, instant filtering, client-side views — none of these were practical with a server-round-trip model. When the full dataset lives in memory, new features that query it are nearly free to build.

When this doesn’t work

To be clear: this approach works because of how Blue’s data is structured. Each workspace is a self-contained unit. A user only ever searches within workspaces they have access to, and the cache already holds all records for those workspaces. If you’re building a search experience across millions of documents that no single user would ever load entirely — a marketplace, a social feed, a document search engine — you need server-side search. That’s what Elasticsearch and MeiliSearch are for.

But for SaaS products where each customer’s data fits in the browser’s memory? The question is worth asking: do you actually need a search service, or do you already have the data?

The meta-lesson

The conventional wisdom is that search is a hard problem requiring specialized infrastructure. And it can be. But we spent months operating a separate search service with sync pipelines, memory tuning, and monitoring — when the answer was already sitting in the browser’s memory.

In the age of AI, prototyping alternatives is cheap. We used Claude Code to build the initial search worker, iterate on the Fuse.js configuration, and wire up the field extraction logic. The whole thing was working in days. A year ago, the “safe” choice would have been to stick with MeiliSearch and keep fighting it. Today, the cost of trying the simple thing first is so low that there’s no reason not to.

Sometimes the best solution isn’t the proper one. Sometimes it’s the obvious one that was already there.

— Manny